Whether it’s writing a student’s essay, serving up seemingly authoritative facts on anything under the sun or prompting philosophical questions about the nature of the mind, a technology mostly unknown only months ago is forcing educators to deal with it head-on.

Artificial intelligence, or AI, most commonly encountered these days in chatbots like ChatGPT, has popped up in many sectors, but in few as immediately as education.

“It’s certainly a hot topic. Everybody loves talking about it,” said Paul Dangerfield, president of Capilano University.

Some local educators, including a number of CapU faculty, see the latest AI developments as another tool for learning – like Google itself or Wikipedia or using calculators in math classes.

The university doesn’t have specific policies on the use of AI, said Dangerfield, adding that so far faculty haven’t felt that necessary.

AI might free people from the more tedious parts of research and allow them to concentrate on critical analysis, he said. It might even help researchers solve pressing social issues, such as those surrounding climate change.

At the same time, “How do we make sure this is not a crutch?” he said. “It’s a conversation happening around North America.”

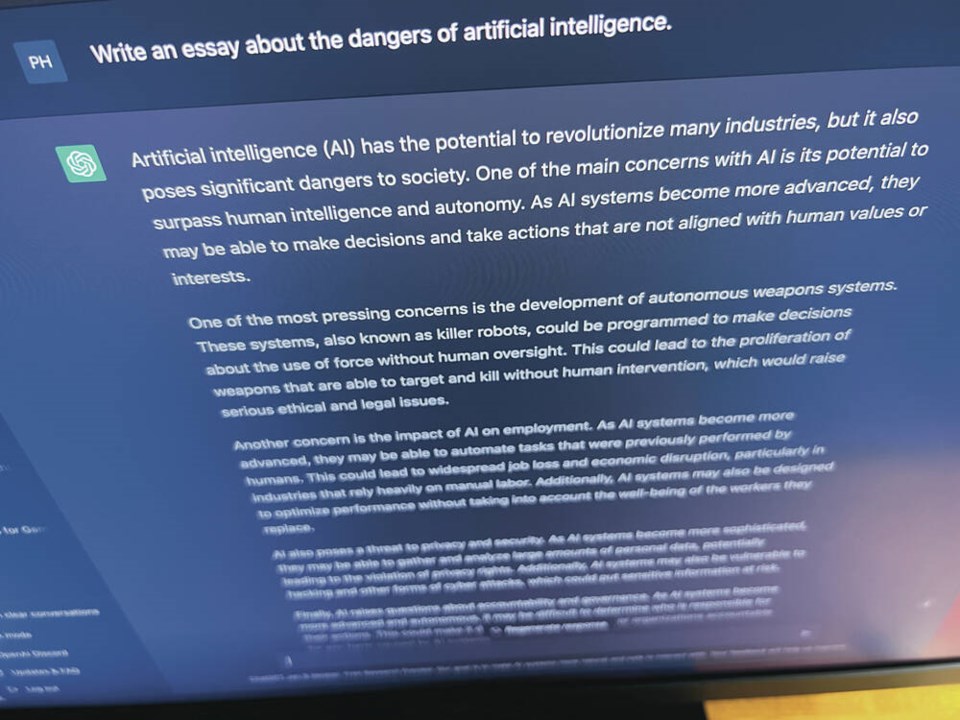

Launched last fall, ChatGPT, developed by the company OpenAI, is the first free artificial intelligence bot in wide public circulation. The current AI technology gives computers the ability to understand and respond to human language, to pull answers from a massive amount of data on the web and to learn and improve over time.

New AI prompts range of reactions

It’s been reported that ChatGPT has aced law school entrance exams, for instance, as well as composed songs and poems, recommended detailed travel itineraries and debugged computer code.

“The truth is for most of us it’s still a new kind of experience,” said Sean Nosek, deputy superintendent of West Vancouver School District. “It ranges from excitement to fear to confusion and uncertainty. It speaks to the power of this tool.”

“It’s forcing all of us in education to be asking some of those deeper questions.”

So far, the school district has been encouraging staff and students to begin experimenting with ChatGPT as a tool, said Nosek.

“Teachers who have experimented with it that way see tremendous possibilities,” he said.

“It is extremely powerful.” At the same time, “It is not 100 per cent accurate.… I don’t want to suggest we’ve got this fully figured out.”

Keith Rispin, a technology and communications teacher at West Vancouver Secondary, has dabbled in ChatGPT.

“I’ve tried it to see if it can write a blog post for me,” said Rispin. But he found the result was disappointingly devoid of any kind of human “voice” that would reflect his own writing style.

Rispin said the risk of students using ChatGPT to do their homework for them is a concern.

A few teachers he knows are getting around that by not sending work home, he said. Essays, for instance, could be written in class more often.

While AI-detecting software does exist and is in use by some teachers and college professors, Rispin said there’s also AI software that deliberately tries to make AI-generated content look more like it’s been created by a person. Rispin described that as a no-win technological “arms race.”

Rispin said he remains hopeful that students will harness the technology to teach themselves in areas of interest – something that’s already happened with previous technological advances.

Society will have to deal with larger AI questions

Larger questions surrounding AI like ChatGPT face all of society, not just educators, Rispin said.

Some people, including those involved in developing AI, have publicly worried about what could happen if AI manages to replicate itself and form its own thoughts and opinions, reaching conclusions that don’t put a premium on protecting humans as a species. (Think Skynet in the Terminator movies.) Other problems could result if a “bad actor” decides to create AI for malicious purposes, said Rispin. “People who are creating AI are just that, they’re people,” he said, and they come with their own biases and make mistakes.

Another danger of trusting the chatbots is AI’s propensity to occasionally produce wildly inaccurate information (referred to by AI researchers as “hallucinations”) and serve it up as authoritative fact.

“It thinks I wrote two books,” said Rispin. (He didn’t.) And while that kind of mistake seems benign, “what if it spat out information that wasn’t very kind, wasn’t very positive?” he mused.

“Is ChatGPT making stuff up about me? There’s no way to track the information and how valid it is in many cases.”

Rispin said that highlights the necessity of teaching students (and everyone else) to use AI responsibly and to check information beyond the chatbot source. “If it is telling you something you cannot prove using conventional means, that should not be included in something you’re pushing out to the world,” he said. “We can’t trust it to be completely truthful and factual.”